In a significant move to bolster online safety for younger users, Google has announced that it will use a machine learning model to estimate whether users are under 18. The aim is to provide age-appropriate experiences across Google's products, including YouTube, by analyzing user data to identify likely minors and extend protections to their accounts.

The system uses machine learning to assess signals such as the types of sites a user visits, their video watch history, and how long the account has existed, and estimates the user's age from them. When the model estimates that a user is under 18, Google applies its under-18 protections to the account, such as SafeSearch and restrictions on certain content. Users who believe they have been misclassified can verify their age with a selfie, a credit card, or a government ID. The approach is intended to ensure that these safety measures reach accounts that likely belong to minors.
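The decision flow described above can be illustrated with a short sketch. This is a minimal example under stated assumptions, not Google's implementation: Google has not published its model, so the age estimator is passed in as a placeholder function, and the names `AccountSignals`, `AccountSettings`, `apply_age_policy`, and `after_verification` are hypothetical. Only the under-18 threshold, the example protections (SafeSearch, restricted content), and the three verification methods come from the description above.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical account signals, loosely mirroring the data points named above.
@dataclass
class AccountSignals:
    site_categories: list[str]
    video_categories: list[str]
    account_age_years: float

# Hypothetical per-account settings; the defaults are the unrestricted state.
@dataclass
class AccountSettings:
    safesearch: bool = False
    restricted_content: bool = False
    verification_options: list[str] = field(default_factory=list)

ADULT_AGE = 18
VERIFICATION_METHODS = ("selfie", "credit_card", "government_id")

def apply_age_policy(
    signals: AccountSignals,
    estimate_age: Callable[[AccountSignals], float],
) -> AccountSettings:
    """Apply under-18 protections when the (placeholder) estimator predicts a minor."""
    estimated_age = estimate_age(signals)
    if estimated_age < ADULT_AGE:
        # Likely minor: turn on protections and offer ways to verify age.
        return AccountSettings(
            safesearch=True,
            restricted_content=True,
            verification_options=list(VERIFICATION_METHODS),
        )
    # Likely adult: leave the account unrestricted.
    return AccountSettings()

def after_verification(settings: AccountSettings, verified_age: int) -> AccountSettings:
    """Lift the protections only if verification confirms the user is an adult."""
    if verified_age >= ADULT_AGE:
        return AccountSettings()
    return settings

if __name__ == "__main__":
    signals = AccountSignals(["gaming"], ["animation"], account_age_years=1.0)
    # A fixed stand-in estimate replaces the real (unpublished) model.
    settings = apply_age_policy(signals, estimate_age=lambda s: 15.0)
    print(settings.safesearch)  # True
```

Keeping the estimator as an injected function separates the policy (what happens below 18) from the model (how age is estimated), which is the part Google has not disclosed.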

This development responds to growing concern about children's safety online and aligns with legislative efforts such as the Kids Online Safety Act (KOSA) and COPPA 2.0, a proposed update to the Children's Online Privacy Protection Act. With these measures, Google aims to create a safer online environment for younger users.

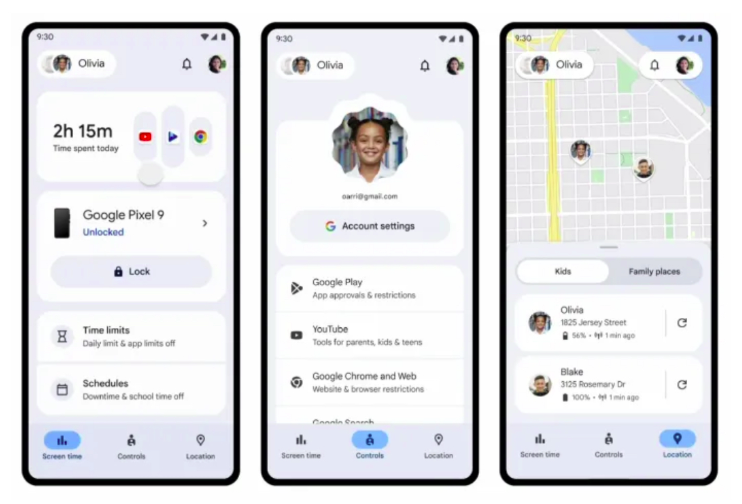

In addition to age detection, Google is expanding parental controls in its Family Link app, giving parents more ways to oversee their children's smartphone use. Parents can track screen time, choose which apps are available, and schedule periods of limited phone functionality that pause notifications during set hours, such as school time or bedtime. They can also approve contacts and manage multiple devices from one place, giving them a broader set of tools for keeping their children safe online.

Google's initiative is part of a broader industry trend toward improving child safety online. Google, OpenAI, Roblox, and Discord were recently among the founding partners of Robust Open Online Safety Tools (ROOST), a non-profit organization focused on child safety online. ROOST aims to make core safety technologies more accessible and to offer free, open-source AI tools for detecting, reviewing, and reporting child sexual abuse material. The joint effort signals a shared industry commitment to accelerating innovation in online child safety.

These advancements reflect a growing recognition of the need for robust online safety measures, especially for younger users. As digital platforms continue to evolve, implementing effective age detection and parental control features becomes increasingly crucial to protect minors from exposure to inappropriate content and potential online threats.

Key Takeaway for Marketing Managers

For marketing professionals, particularly those operating in the Middle East, these developments highlight the importance of following age-appropriate content guidelines and keeping up with a changing digital landscape. As platforms like Google apply stricter age detection, marketing strategies need to stay compliant with these new controls.

Marketers should consider the following actions:

- Review Content Strategies: Ensure that all marketing content is suitable for the intended audience, taking into account the enhanced age detection mechanisms that may restrict access for younger users.

- Stay Informed on Policy Changes: Keep abreast of updates to platform policies and legislative changes related to online safety to maintain compliance and uphold brand integrity.

- Leverage Parental Control Features: Understand the capabilities of tools like Google’s Family Link to better tailor marketing efforts that respect parental controls and user privacy.

By proactively adapting to these changes, marketing managers can ensure that their strategies remain effective and responsible, fostering trust and engagement among diverse audience segments.